Jump! Did you feel it? The split-second tension in your calves? The silent command in your throat? Your brain screamed "MOVE", but your body stayed still. The command was loud. The movement was silent. That silence is data. And for my last year at university, I am building the interface to hear it.

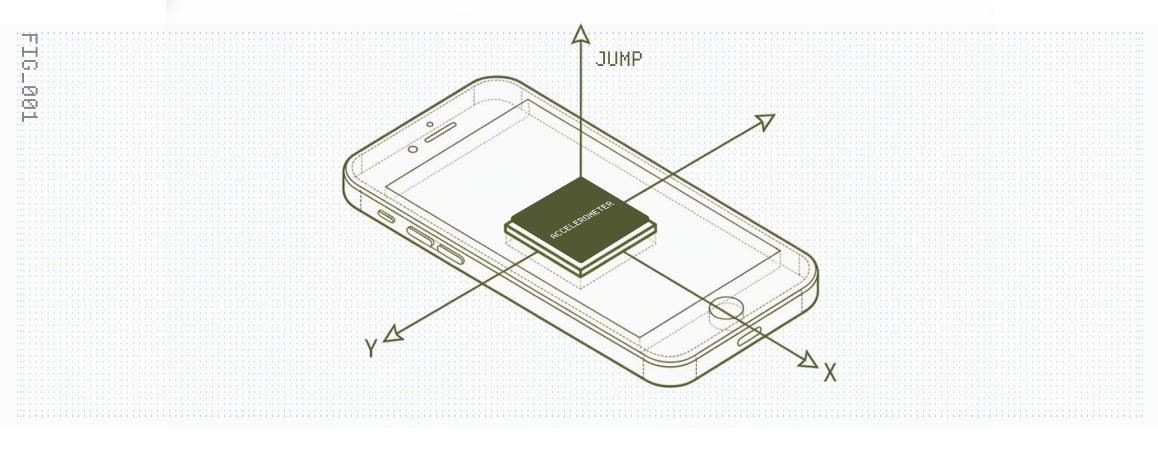

We carry supercomputers with accelerometers in our pockets, yet we tap on glass like cavemen. In Phase 1, I turned a phone into a sword. I used raw IMU data to control a video game character, proving that "motion" is just a stream of numbers waiting to be interpreted.

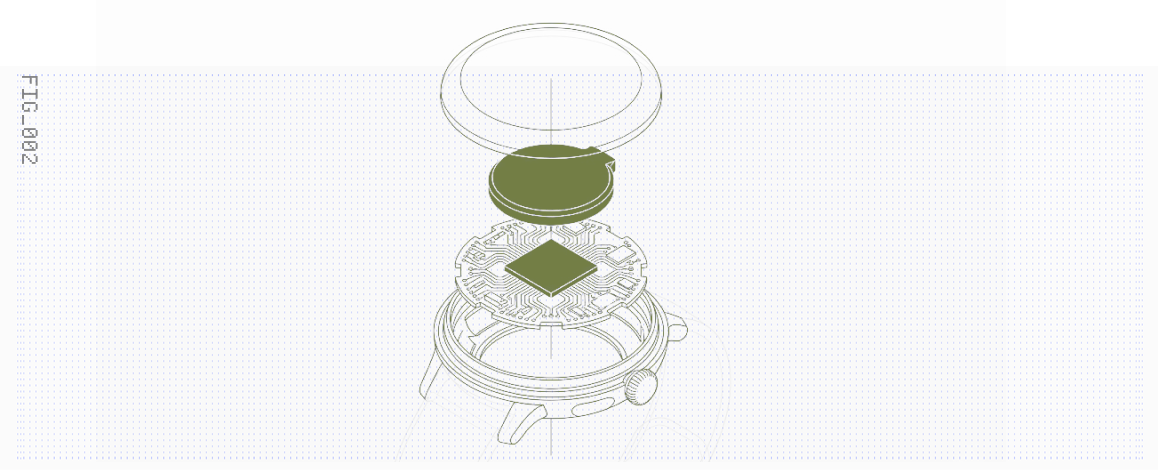

But who holds a phone while gaming? I needed it wearable. I strapped the sensor to my wrist and taught a machine to know the difference between a punch and a wave. I built a "Press-to-Label" system to solve the data collection problem, proving that good ML starts with good data engineering.
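One plausible reading of "Press-to-Label" is that samples recorded while a button is held get tagged with the gesture being performed. The sketch below assumes exactly that; `segment_by_press`, the `(ax, ay, az)` sample shape, and the `min_len` cutoff are illustrative names and choices, not the project's actual code.

```python
from typing import List, Tuple

# Hypothetical sample shape: one accelerometer reading (ax, ay, az).
Sample = Tuple[float, float, float]


def segment_by_press(
    samples: List[Sample],
    pressed: List[bool],
    label: str,
    min_len: int = 3,
) -> List[Tuple[str, List[Sample]]]:
    """Group consecutive samples recorded while the button was held.

    Runs shorter than `min_len` are treated as accidental taps and dropped.
    """
    if len(samples) != len(pressed):
        raise ValueError("samples and pressed must have the same length")

    segments: List[Tuple[str, List[Sample]]] = []
    current: List[Sample] = []
    for sample, is_down in zip(samples, pressed):
        if is_down:
            current.append(sample)
            continue
        # Button released: close the current run if it is long enough.
        if len(current) >= min_len:
            segments.append((label, current))
        current = []
    # Close a run that was still open when the recording ended.
    if len(current) >= min_len:
        segments.append((label, current))
    return segments
```

Labeling at capture time this way means every stored segment already carries its class, so no one has to annotate recordings afterwards.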

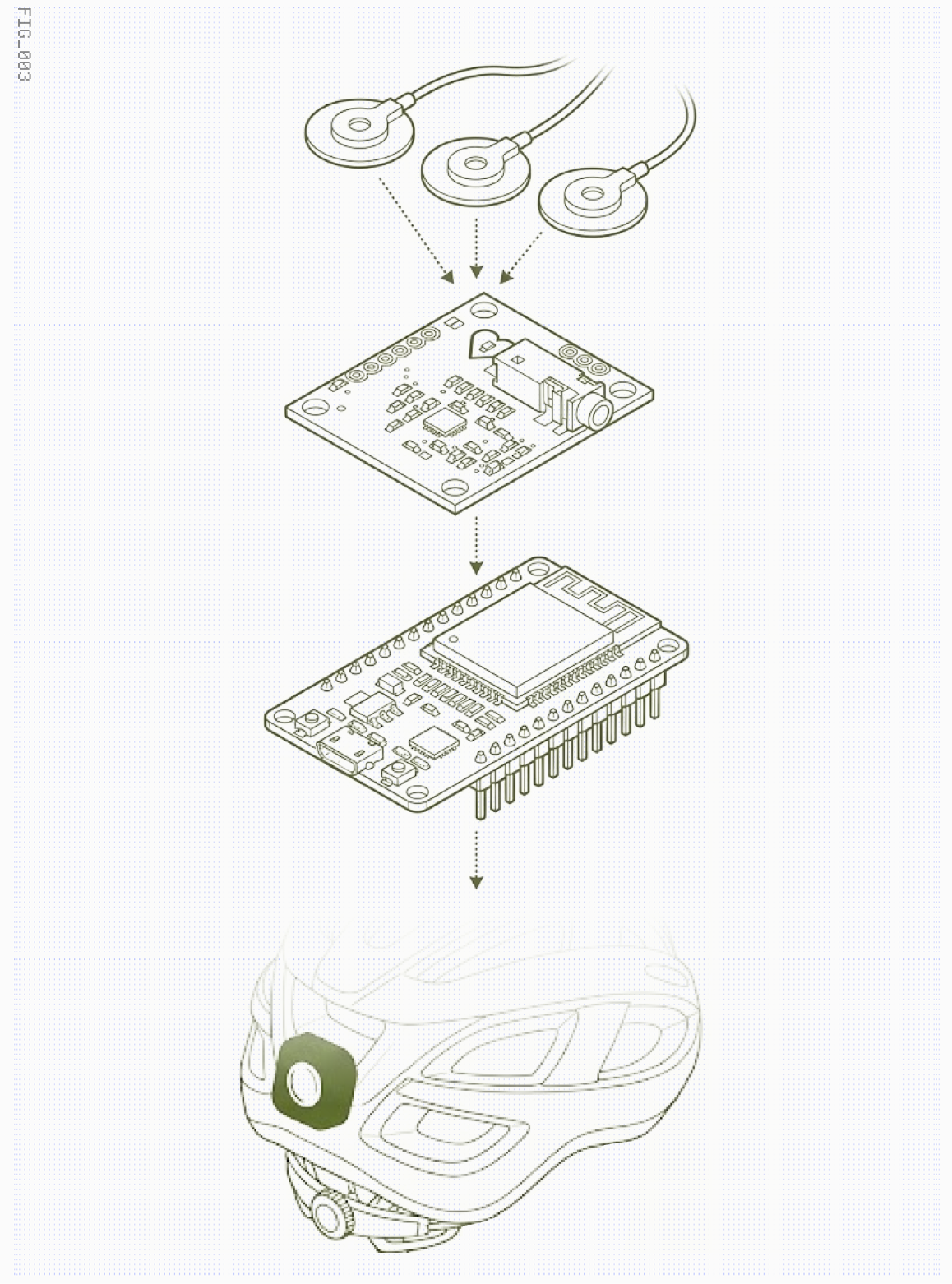

Though... motion is crude. It suffers from the "friction between having a thought and the physical action required to input it." I wanted the spark. Phase 3 began as a hands-free turn signal for cyclists, detecting the specific flex of the Flexor Digitorum Profundus slip that leads to the pinky finger. I benchmarked 18 architectures on a "Ladder of Abstraction" (detailed in Technical Narrative), from simple variance thresholds to models combining Inception blocks, Bi-LSTMs, and Multi-Head Attention.
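For a sense of what the bottom rung of that ladder can look like, here is a minimal variance-threshold detector. It is a sketch under assumptions: the function name `variance_gate`, the non-overlapping windows, and the threshold value are illustrative, not the benchmarked implementation.

```python
from statistics import pvariance
from typing import List, Sequence


def variance_gate(signal: Sequence[float], window: int, threshold: float) -> List[bool]:
    """Flag each non-overlapping window whose variance exceeds `threshold`.

    A resting muscle produces a low-variance signal; a flex produces a burst,
    so variance alone can act as a crude "active / not active" detector.
    Trailing samples that do not fill a whole window are ignored.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    flags: List[bool] = []
    for start in range(0, len(signal) - window + 1, window):
        chunk = signal[start:start + window]
        flags.append(pvariance(chunk) > threshold)
    return flags


# Usage: quiet baseline, a burst, then quiet again.
rest = [0.0, 0.01, -0.01, 0.0]
flex = [1.0, -1.0, 1.0, -1.0]
print(variance_gate(rest + flex + rest, window=4, threshold=0.1))
```

There is nothing to train here: one threshold, tuned by hand, which makes it a useful floor for comparing the more elaborate models.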

The surprise? Deep Learning failed (49.6%). Random Forest won (74.3% at 0.01 ms latency). This validated a core Ubicomp thesis: on the edge, feature engineering > raw compute.
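To make "feature engineering" concrete, the sketch below computes four time-domain features that are commonly used in the sEMG literature: mean absolute value, root mean square, waveform length, and zero crossings. Whether the project used these exact features is an assumption; `emg_features` and `zc_eps` are illustrative names.

```python
import math
from typing import Dict, Sequence


def emg_features(window: Sequence[float], zc_eps: float = 0.0) -> Dict[str, float]:
    """Summarize one signal window as a small, fixed-size feature vector."""
    n = len(window)
    if n < 2:
        raise ValueError("window needs at least two samples")

    pairs = list(zip(window, window[1:]))
    return {
        # Mean absolute value: average signal amplitude.
        "mav": sum(abs(x) for x in window) / n,
        # Root mean square: relates to signal power.
        "rms": math.sqrt(sum(x * x for x in window) / n),
        # Waveform length: cumulative sample-to-sample change.
        "wl": sum(abs(b - a) for a, b in pairs),
        # Zero crossings: sign changes larger than zc_eps, a rough frequency cue.
        "zc": float(sum(1 for a, b in pairs if a * b < 0 and abs(a - b) > zc_eps)),
    }


# Usage: a window of raw samples collapses to four numbers.
print(emg_features([1.0, -1.0, 1.0, -1.0]))
```

A tree ensemble such as a Random Forest only has to split on a handful of numbers like these per window, which is one reason inference can be so much cheaper than running a deep network on raw samples.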

Why move at all? Speak without sound. Phase 4 replicates the MIT Media Lab's AlterEgo (Arnav Kapur, Pattie Maes). By placing electrodes on the jaw, I capture Neuromuscular Signals: the "echoes" of internal speech on the skin. This is a Peripheral Nerve Interface, detecting the intent to speak before sound leaves your mouth. The insight? Muscles sound like hearts. I hacked a simple heart sensor (AD8232) to capture the subvocal frequency range (1.3–50 Hz), democratizing the Silent Speech Interface with off-the-shelf components.
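The 1.3–50 Hz band can also be isolated in software. The sketch below cascades a first-order high-pass and a first-order low-pass filter in plain Python. It is an illustration under assumptions: `band_pass` is a hypothetical helper, the sampling rate in the usage example is made up, and the actual project may rely on the AD8232's analog filtering or on a sharper digital filter.

```python
import math
from typing import List, Sequence


def band_pass(signal: Sequence[float], fs: float,
              low_hz: float = 1.3, high_hz: float = 50.0) -> List[float]:
    """First-order high-pass at `low_hz` followed by first-order low-pass at `high_hz`.

    The high-pass removes DC offset and slow drift; the low-pass attenuates
    content above the band. First-order stages roll off gently (about
    20 dB per decade each), so this is a coarse filter, not a brick wall.
    """
    if not 0 < low_hz < high_hz < fs / 2:
        raise ValueError("need 0 < low_hz < high_hz < fs / 2")
    if len(signal) == 0:
        return []

    dt = 1.0 / fs
    rc_hp = 1.0 / (2 * math.pi * low_hz)
    rc_lp = 1.0 / (2 * math.pi * high_hz)
    a = rc_hp / (rc_hp + dt)   # high-pass coefficient
    b = dt / (rc_lp + dt)      # low-pass smoothing factor

    out: List[float] = []
    hp_prev, lp_prev = 0.0, 0.0
    x_prev = signal[0]
    for x in signal:
        hp = a * (hp_prev + x - x_prev)       # high-pass stage
        lp = lp_prev + b * (hp - lp_prev)     # low-pass stage
        out.append(lp)
        hp_prev, lp_prev, x_prev = hp, lp, x
    return out


# Usage with an assumed 1 kHz sampling rate: a 10 Hz tone is inside the band.
fs = 1000.0
tone = [math.sin(2 * math.pi * 10 * i / fs) for i in range(1000)]
filtered = band_pass(tone, fs)
```

The high-pass corner near 1.3 Hz is what strips electrode drift and motion baseline, while the 50 Hz corner limits higher-frequency noise.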

The ultimate interface is silence.

CP194 Capstone · Minerva University · Spring 2026

SOMACH

A $40 dual-channel sEMG system for silent speech classification.

2 studies · 3 arXiv papers · 4,033 CSVs · open-source.

12 slides. The academic oral defense version — research question, hardware, methodology, key findings (onset burst + electrode orthogonality), results, and next steps. Built for a 10-minute presentation.

Full Journey: Extended Journey Deck

22+ slides. The full story, from the Phase 0 EEG failure through Phase 4, including the Biological HUD long-term vision, side quests, publications, and tools built along the way.
